Bombs, Brains, and Science: Would you rather lose 10% of your researchers or 10% of your labs?

In most Engineering Innovation posts, I try to provide some new, evidence-based argument on how to improve the innovation pipeline. The posts try to bring readers on the journey through the academic evidence and relevant history that informs the argument.

But this post will be different.

In this post, I won’t be taking a stand at all. The purpose of this post is to tell you about a paper that’s just plain cool and deserves to be far better known.

The main reason I won’t be making any kind of argument is that, frankly, this paper was full of astonishing details that I have not quite been able to wrap my mind around just yet. But I’m sure the details will inform your thinking and possibly even spawn some great pieces from subscribers making sense of what I couldn’t.

I’ll share both the main findings of the paper and a host of other details that paint a compelling picture of just how different this previous era of science was from today’s.

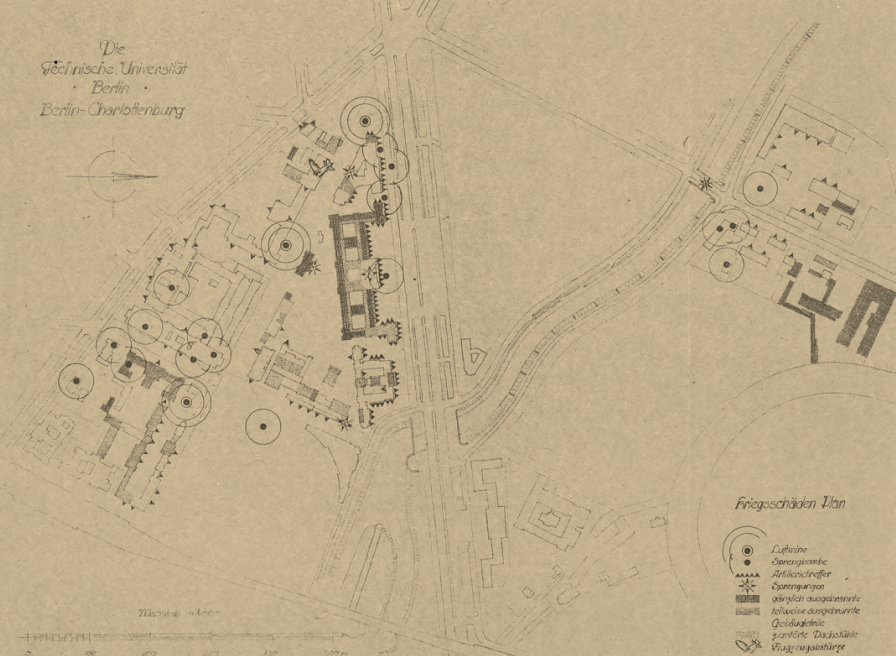

The paper, Bombs, Brains, and Science, is a wonderful work of economic history in which the University of Munich economist Fabian Waldinger attempts to understand whether the dismissal of Jewish scientists at German Universities in the 1930s hurt departmental output more than Allied bombers dropping actual bombs on a department’s buildings.

The answer is not so obvious. Would you rather have 10% of your department’s buildings and lab space disappear tomorrow or have 10% of your researchers disappear?

Let’s find out.

The Shocks

I’ll start by diving into what exactly the two shocks were that provided Waldinger his ‘natural experiments’ to measure.

Dismissals

On April 7, 1933, the newly empowered National Socialist Party of Germany passed the Law for the Restoration of the Professional Civil Service. Jews and other ‘politically unreliable’ individuals were to be dismissed from the civil service, effective immediately. Any scientist with at least one Jewish grandparent was summarily dismissed from their academic position. Those with opposing political views, such as having attended one too many meetings supporting the communist movement, also found themselves out of a job.

There were some exemptions at the time. For example, if you were Jewish but had a close family member who died in World War I, then you got to keep your job.

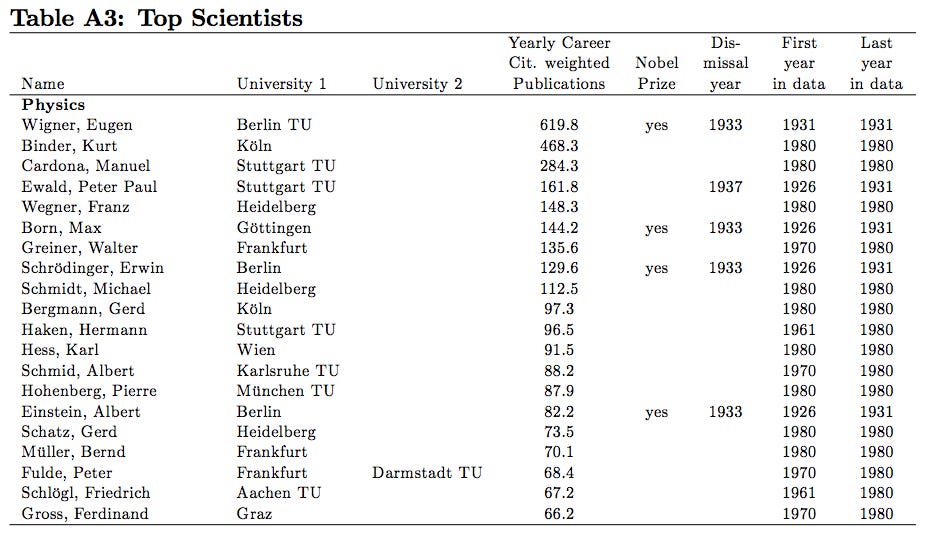

Regardless, even those remarkably specific exemptions were revoked in 1935 and, in the end, over 1,000 academics were dismissed from German Universities. This included 15% of physicists, 14% of chemists, and almost 19% of mathematicians. And these were talented researchers at that:

Many dismissed scientists were outstanding members of their profession. They published more top journal papers and received more citations than average scientists. While 15.0% of physicists were dismissed, they published 23.8% of top journal papers before 1933, and received 64% of the citations to papers published before 1933. In chemistry, 14.1% were dismissed but wrote 22.0% of top journal articles and received 23.4% of citations. In mathematics, 18.7% were dismissed but wrote 31.0% of top journal papers and received 61.3% of citations.
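To make the over-representation in that passage concrete, here is a minimal sketch that divides each field's share of output by its share of dismissed scientists. The percentages are the ones quoted above; the variable and function names are my own, and the ratio is just an illustrative summary, not a statistic from the paper.

```python
# Shares (in percent) quoted in the passage above.
# "dismissed" = share of scientists dismissed,
# "papers" / "citations" = share of pre-1933 output attributed to them.
fields = {
    "physics": {"dismissed": 15.0, "papers": 23.8, "citations": 64.0},
    "chemistry": {"dismissed": 14.1, "papers": 22.0, "citations": 23.4},
    "mathematics": {"dismissed": 18.7, "papers": 31.0, "citations": 61.3},
}


def overrepresentation(output_share: float, people_share: float) -> float:
    """How many times larger the output share is than the headcount share."""
    return output_share / people_share


# Illustrative ratio per field: 1.0 would mean the dismissed scientists
# were exactly as productive as the average scientist.
ratios = {
    name: {
        "papers": overrepresentation(f["papers"], f["dismissed"]),
        "citations": overrepresentation(f["citations"], f["dismissed"]),
    }
    for name, f in fields.items()
}

for name, r in ratios.items():
    print(f"{name}: papers x{r['papers']:.2f}, citations x{r['citations']:.2f}")
```

A ratio above 1 in every cell is another way of saying the dismissed scientists punched above their headcount in all three fields, most dramatically in physics and mathematics citations.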

In general, the dismissals were shockingly uncorrelated with many things you’d expect them to be correlated with, such as the number of ardent Nazis in a department. And, once the policy was carried out, the dismissals affected the departments to varying degrees. Some departments lost no researchers and some lost as many as 60% of their professors.

All of that is to say, a large sample of academics was dismissed all at once. And the negative effects were noticed by many at the time.

In the late 1930s, a high-ranking Nazi official asked David Hilbert, mathematical god of the early 1900s who had built Göttingen into the math capital of the world, about the state of mathematics in Göttingen. The official was curious to know how it was going now that the department had been “freed of the Jewish influence.” Hilbert is said to have replied, “There is no mathematics in Göttingen anymore.”

Bombings

In 1939, the UK’s Royal Air Force (RAF) began their bombing campaign against the Germans. In the beginning, these were quite concentrated bombings on military targets such as fleets of German ships. As Germany invaded more Central European countries in 1940, the RAF strategic bombing targets expanded to oil reservoirs, rail lines, aircraft factories, U-boat shipyards, and more.

To avoid heavy casualties from German antiaircraft defense, the RAF flew most of their missions at night. But this tactic had a tradeoff; it’s hard to be accurate when you can’t see what you’re trying to hit...which tends to be important in ‘targeted’ bombing.

In the RAF’s defense, airplanes were still quite new as a warfare technology and effective tactics and navigation technology were both works in progress. Partially in response to RAF planes not being able to hit the broad side of a barn, the RAF began to aim at much bigger targets: entire cities. These ‘area attacks’ were meant to affect the morale of the German people. And, while the goal was to concentrate damage on town centers, even that was an overly ambitious goal. Only about 20% of the bombers managed to navigate within 5 miles of their destination. So, even whole towns were often missed. An October 1, 1941 bombing raid on Karlsruhe and Stuttgart hit not only those two target cities, but an additional 25 cities as well, some of them up to several hundred kilometers away. To call some of these bombing raids a “spray and pray” approach would be an understatement.

All of that is to say: whether or not a university was hit by a bomb was more or less random.

And all of this quasi-random bombing only increased as the war went on. In 1942, the British issued a directive to intensify the area bombing campaign. Arthur “Bomber” Harris, who has one of the sickest and most apt military nicknames in modern history, took over the job with gusto.

We are going to scourge the Third Reich from end to end. We are bombing Germany city by city and ever more terribly in order to make it impossible for her to go on with the war. That is our object; we shall pursue it relentlessly. - “Bomber” Harris

“Bomber” Harris was a man of his word and quickly proved worthy of his nickname. On May 30, 1942, the RAF flew a 1,000-bomber attack over Cologne, a city that had been hit by at most 40 planes at once in the 107 attacks that preceded this one. The raid damaged about a third of Cologne’s surface area in total. Massive surface area damage like this was made much easier for the RAF by the newfound availability of incendiary bombs, which started large fires in densely packed cities.

In total, Allied bombings completely destroyed about 18.5% of German homes. Inner-city homes represented an inordinately high chunk of these homes since inner-cities were the very rough target of these campaigns. Coincidentally, inner-cities also are where a high share of universities are located.

All of that is to say: there was a large sample of university research buildings that were partially or fully destroyed by Allied bombs without much rhyme or reason.

The Main Question

Would you rather lose 10% of your researchers or 10% of your labs?

Now, getting your academic building partially blown to shreds is obviously bad for departmental productivity. But, so is having up to 15% of your professors fired for something as arbitrary as having a Jewish grandparent or attending a communist party meeting. And, while it’s obvious that both of these will at least temporarily decrease departmental output, it is not clear at all which is worse, either in the short run or in the long run.

Comparing the Setbacks: Bombs vs. Brains

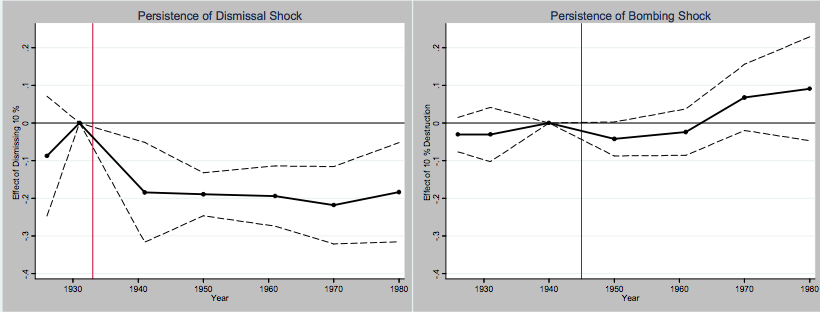

In the short-run

In the short run, a 10% shock to human capital (dismissing 10% of a department’s scientists) reduced departmental output by 0.2 standard deviations. A 10% shock to physical capital (the destruction of 10% of a department’s buildings) lowered output by 0.05 standard deviations. The effect of losing 10% of your researchers was 4X that of losing 10% of your buildings in the short run.

In the long-run

In the long-run, the effects of dismissing researchers persisted. Departments continued to underperform up through 1980, when the data for the study stops. Meanwhile, by 1970, departments that were bombed were experiencing increased productivity, meaning that bombed departments may have benefited from upgrading during postwar reconstruction.

Below, you can see the persistent effects of the dismissals compared to the exact opposite long-run effects in the case of the bombing shock.

Waldinger elaborates on the massive long-run impacts of the shocks:

My estimates indicate that the dismissals of scientists reduced output in affected German and Austrian science departments between 1933 and 1980 by 9,576 top journal publications, a reduction of about 33.5%. Output, as measured by citation-weighted publications, declined by 191,920 (34.6%) citations as a result of the dismissals. In the same time period, dismissed scientists produced 1,181 top journal publications receiving 32,369 citations. These results indicate that German science lost much more than the publications of the dismissed scientists because the reduction in output in departments with dismissals persisted at least until 1980. WWII bombings of German and Austrian science departments reduced output of affected departments between 1944 and 1980 by 1,028 top journal publications, a fall of about 5.7%; citation-weighted publications declined by 22,194 (6.4%). These calculations suggest that the dismissal of scientists in Nazi Germany contributed about nine times more to the decline of German science than physical destruction during WWII.

To repeat: the dismissal of scientists in Nazi Germany contributed NINE TIMES MORE to the decrease in scientific output in Germany than did the destruction of a large percentage of its research buildings.
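As a quick sanity check on that headline figure, the sketch below recomputes the ratio from the totals in Waldinger's passage. The variable names are my own. Note that the two windows differ (1933 to 1980 for dismissals, 1944 to 1980 for bombings), so this is only a back-of-the-envelope comparison of the quoted totals, not a reproduction of the paper's calculation.

```python
# Totals quoted from the passage above (affected German and Austrian departments).
dismissal_pubs_lost = 9_576      # top journal publications, 1933-1980
dismissal_cites_lost = 191_920   # citation-weighted publications, 1933-1980
bombing_pubs_lost = 1_028        # top journal publications, 1944-1980
bombing_cites_lost = 22_194      # citation-weighted publications, 1944-1980
dismissed_own_pubs = 1_181       # publications by the dismissed scientists themselves

# How many times larger the dismissal loss is than the bombing loss.
pub_ratio = dismissal_pubs_lost / bombing_pubs_lost
cite_ratio = dismissal_cites_lost / bombing_cites_lost

# How much larger the departmental loss is than the dismissed scientists' own output.
loss_vs_own_output = dismissal_pubs_lost / dismissed_own_pubs

print(f"publications: {pub_ratio:.1f}x, citations: {cite_ratio:.1f}x")
print(f"departmental loss vs. dismissed scientists' own output: {loss_vs_own_output:.1f}x")
```

Both ratios land close to nine, which matches the paper's summary. The last ratio shows why Waldinger says German science lost much more than the dismissed scientists' own publications.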

That is a massive number considering the percentage of research buildings that were bombed is likely similar to the percentage of academics dismissed.

What seems to be driving this trend?

Surprisingly, the career trajectories of the scientists that stayed in the German departments did not seem affected at all. The reason the dismissal of their colleagues seemed to cause a persistent drop in quality that lasted decades was the inability of the universities to recruit at the high levels they had been previously.

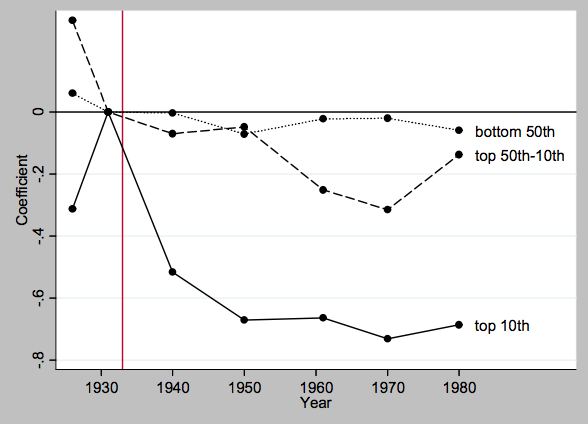

The negative effects were particularly pronounced in places that lost superstars. The data include eleven dismissed Nobel laureates such as physicists Albert Einstein and Max Born and chemists Fritz Haber and Otto Meyerhof. When Waldinger explores this phenomenon he finds that, in the long-run, the loss of a scientist in the top 5th percentile, for example, reduced output by between 0.7 and 1.6 standard deviations, compared to the 0.2 standard deviation reduction in output associated with the loss of an average scientist.

The following graph demonstrates the varying negative effects on department output of losing a below-average (bottom 50th), above-average but not top 10% (top 50th-10th), and top 10% (top 10th) scientist.

Talent draws talent. Or, as the kids say, real recognizes real.

In the short run, departments lost out because they lost some of their best researchers. And in the long run, they lost out because, without their best researchers, they could not recruit the best young researchers. But, in situations like that of Göttingen, it’s not that researchers like Hilbert were rendered useless by the dismissal of their elite colleagues. They just missed their friends.

But these friends went elsewhere, and seemingly continued to do well.

Other interesting graphics and details

The seemingly zero-sum nature of all of this

In all of this, what is so shocking to me is the seemingly zero-sum nature of what happened. Everything about what we might be told to expect from something like this did not happen. There was no temporary scientific dark age in which German scientists became useless because their best collaborators were exiled.

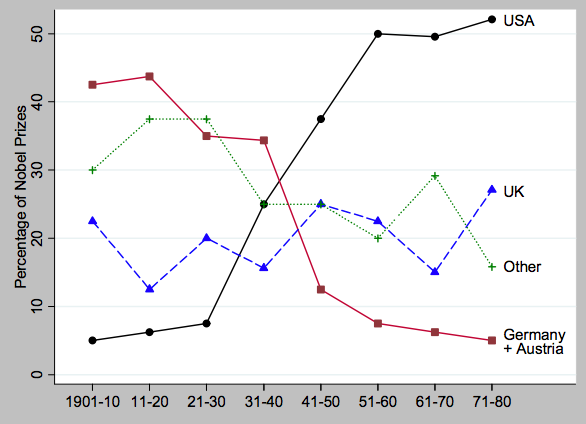

Science as a community did not suffer nearly as much as you might expect. Göttingen died. But a new mathematical center of the universe had been born. Princeton University and the Institute for Advanced Study (IAS) were more than happy to soak up all of the politically undesirable European gods of the academic world that needed a place to go. People like Einstein, John von Neumann, and Eugene Wigner would make a home there. The accents of these émigrés were so prominent in Princeton that Central European-accented English came to be known around town as “Fine Hall English”, after Fine Hall, Princeton’s math department building and the initial home of IAS.

Promising young math, physics, and chemistry graduate students from the US like Robert Oppenheimer and Karl Compton would never again go to Germany to learn from the best in the world. American academia now held that crown, in no small part due to the wartime European émigrés. You can clearly see this trend play out in the following graph of Nobel Prize frequency by country.

Partially Göttingen-trained, Oppenheimer and Compton would run the next generation of Göttingens: Oppenheimer as director of Princeton’s IAS and Compton as president of MIT, which was fast growing into a postwar applied and basic research powerhouse and fully outgrowing its former technical school roots.

The immense talent density of early 1900s science

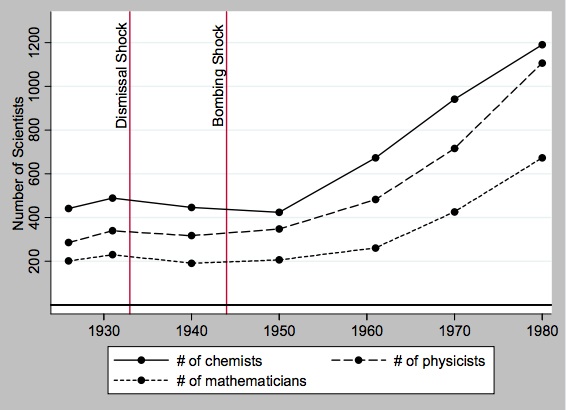

In 1931, there were under 400 physics professors in all of Germany. That includes any position from privatdozents through full professors. I have no clue what the total number is now, but, as in the US, I’m sure it is massive by comparison. Just doing a headcount on the University of Munich’s website, I see at least 70 physicists in that department alone. And there are hundreds of other German universities, not to mention the massive Max Planck Institute.

And it’s not like the German population is drastically different today than it was 90 years ago. The population has only grown from about 67 million to 83 million in that time period.

But...my god was that small group of professors talent-dense. Take a look at the table below where Waldinger lists out some of the most cited scientists in the data. Eugene Wigner, Max Born, Erwin Schrödinger, and Albert Einstein were all active researchers in this period. Those four scientists that I just listed, not just Nobel Prize winners but all-time greats amongst Nobel Prize winners, were more than 1% of Germany’s physicists at the time!

And it was known that they were great in their own time, while they were still young and in the middle of their careers.

A list of the top 1% of physicists in the US, the current scientific powerhouse, would be so underwhelming in comparison that it might border on embarrassing. The list would generally be made up of physicists whose careers, while very productive and full of useful findings, are extremely modest compared to those of the four scientists above.

The rapid increase in scientists and scientific funding

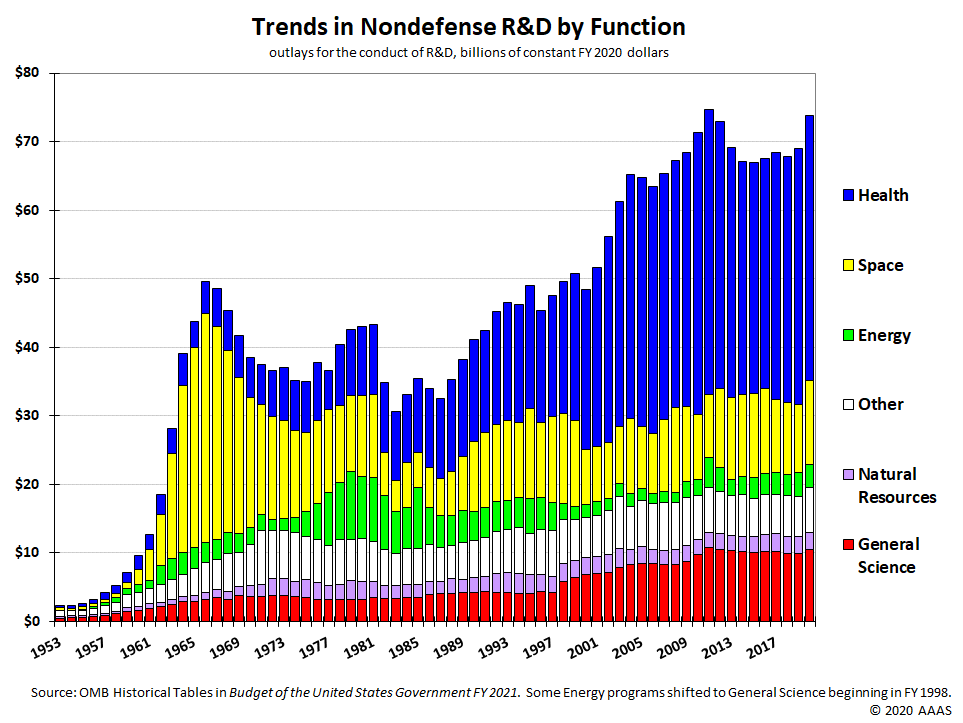

In the first Engineering Innovation post, I shared a graphic demonstrating America’s pronounced and continued increase in public spending on research starting in the post-war years.

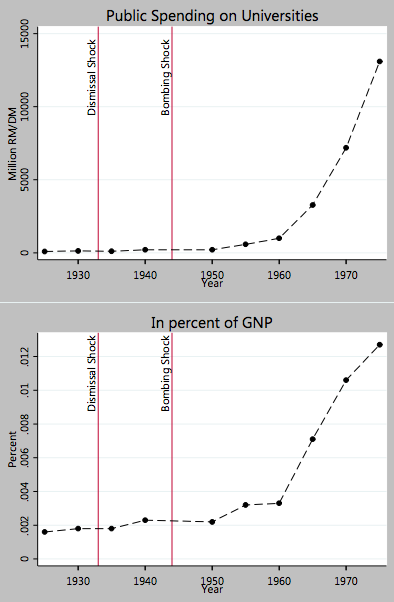

To gain some perspective, it turns out a similar thing happened in Germany. I’d never looked into whether this was primarily a US phenomenon or whether it happened elsewhere as well. That was before I came across this remarkably similar graphic in Waldinger’s appendix.

The number of scientists also began to rise precipitously. So they, like us, seem to have grown much less talent dense since Germans also seem to have fewer and fewer obvious generational talents despite this rapid increase in scientists per capita.

As the comparative politics folks say, “he who knows one country, knows none.” So, if you only knew about these trends in the US context before, hopefully this information provides a useful second data point to put things in context in the future.

The post-war years sparked the beginning of decades of increased investment in science even in a post-war Germany that was in the midst of economic recovery from the Nazi era.

Science was far cheaper

I’ve been thinking about the massive sticker price of modern scientific research for about a month now. And I’m hoping to write a piece on this topic soon assessing whether this is just a natural cost of doing science or if it is a symptom of something else. (My thoughts are not organized enough to say any more about that now.)

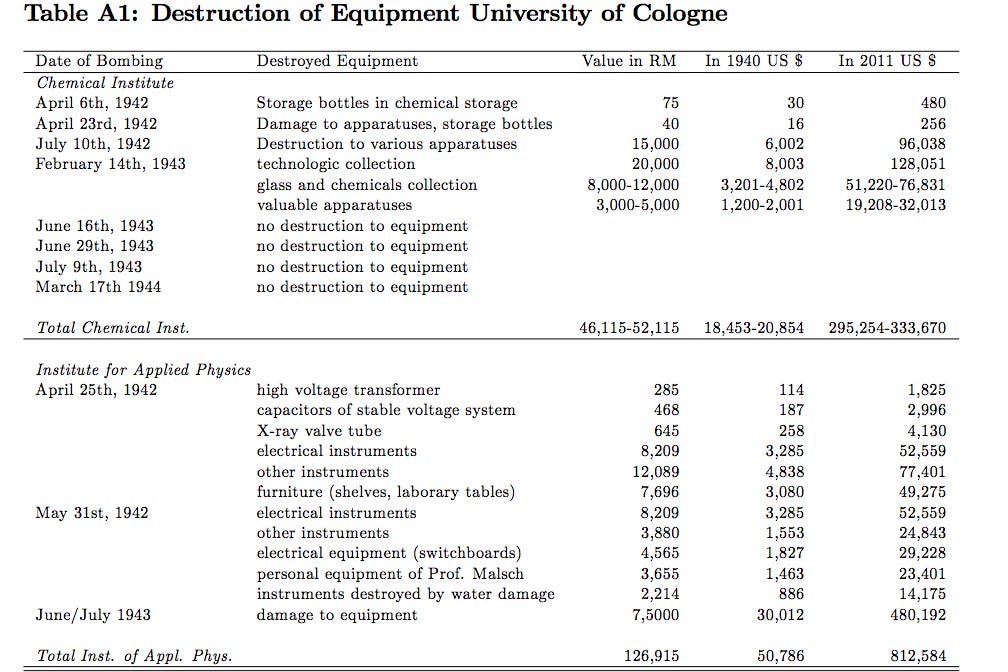

I knew from my reading of various biographies that the cost of doing even complex, experimental science was much cheaper in the previous era, but Waldinger’s paper was the first time I saw actual numbers demonstrating just how absurdly cheap things were in comparison to today.

The following table shows the total damage claims for the University of Cologne’s modestly sized Chemical Institute and Institute of Applied Physics which were severely damaged in an RAF raid. The TOTAL DAMAGES to the Chemical Institute were only $300,000 in 2011 dollars. And the damages to the Institute of Applied Physics were only a shade over $800,000, in 2011 dollars.

While these Cologne labs might not exactly have been powerhouses, there are plenty of individual pieces of lab equipment that cost far more than $800,000 now! A university president might have a small heart attack if they learned that they somehow had to find the funds to rebuild and re-stock even a third of a single research building nowadays.

Aging and output

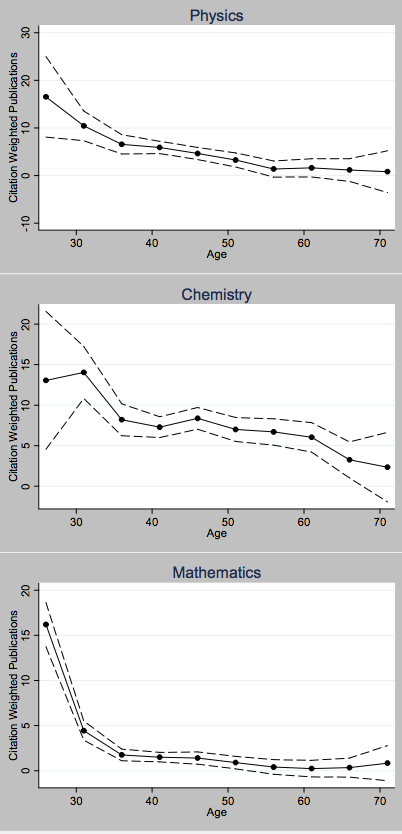

Many people know that mathematicians tend to be terrified of turning 30. They are very aware that very few have ever made their best contributions to the field past that age. But I’d never seen this relationship graphed out, let alone compared to other adjacent fields like physics and chemistry.

The following graphic, showing the average citation weighted publications for the three fields by age from Waldinger’s data, demonstrates that the mathematicians were right to be afraid. While the average physicist and chemist seem to be at least half as productive at the age of 40 as they were at their peak, mathematicians are borderline useless by 40. They only seemed to be about 1/8th as productive at that point.

I’d be curious to know if the trend is as bad now as it was in the generation of mathematicians in Waldinger’s data. On the bright side, it can’t be much worse.

The Results Across Departments

Waldinger, at one point, separated the data by discipline to see whether the physical capital shocks had differing effects across disciplines. For example, one might expect a physical capital shock to be far more devastating to a chemist who is dependent on lab work than a mathematician who can simply use chalk and a blackboard.

However, the estimates were more or less indistinguishable across disciplines. Looking at the tables in the appendix of the paper, I’d say that it is distinctly possible that reducing the sample size of the dataset to control for discipline might have had something to do with this, and that a larger dataset with more universities might have shown more differentiated results.

Regardless, it likely says something about how surprisingly close the coefficients for each discipline actually are that cutting a fairly large dataset in thirds might make it too difficult to distinguish the effect of a bombing on one discipline’s researchers from another’s.

A Concluding Thought

The academics in departments that got bombed were, in general, still able to produce science that was remarkably close to their peers who had not been bombed. I’m not sure our modern scientific ecosystem would perform anywhere near this admirably under this kind of adversity.

Is this a problem that needs to be fixed? Or just part of science evolving and growing?

IMHO we should aspire to spend larger chunks of our research grant money less lavishly, funding more basic research and a higher number of projects. But this is a thought I’m still exploring and I would love to know the readers’ thoughts on the topic.

Hope you enjoyed this week’s post:) Please reach out on Twitter with any questions or thoughts. Until next time.

Science and art belong to the whole world, and before them vanish the barriers of nationality. - Goethe

(This is a cross-post. Originally published on my Substack which is linked.)

Nice post. Yes, Fabian's paper is brilliant. IIRC, in a separate paper, he uses the same shock to study peer effects and finds no impact, which seems completely robust but simultaneously bizarre.

I'm supposed to be studying for my final qualifying exams but talk of physical capital is too enticing...

A couple of related papers you may find interesting:

I'm in the early stages of working on some ideas around the importance of physical capital. It would be good to chat at some point.

This first paper, by B&G, is such a fascinating piece of data collection work. You're absolutely right. Do you have any rough guesses on how much of the issue is building a course of research on niche capital itself vs. the kind of person who does that kind of thing? I'm sure they both have an effect. I ask because I would not be shocked if the following hypothesis held: "People usually only pursue a course of research that requires specialized equipment if they are extremely dedicated to that problem over all others/that is an area of clear comparative advantage to them and they don't believe they can contribute as much to other areas."

That might be mere conjecture though and I'm not one to lend too much credibility to personal hunches without evidence. Do you think there's any work that can help us think through that question? Even if tangentially. As much as it can feel like it sometimes, a paper does not exist for everything.

In the chart "Trends in Nondefense R&D by Function", I just can't understand why we spend so little on energy research. We spend less on energy than on space. It just seems illogical to me.

Interesting paper and great writeup.

One thing I wonder about: how much of the “brains” setback was due to it being from an evil, self-imposed policy? That is, the bombing was a sort of random, external factor. But the expulsion of the Jews was a conscious policy from the regime. If the scientists had randomly died of disease or something, instead of being deliberately kicked out, would the effect have been similar?

Hmmmmm this is particularly interesting because, if the setback was really a recruiting problem, it breaks the problem down in a way I hadn't thought about. Because when most people deal with this question they treat it as kind of a "are there currently good people there? Yes or no?" But your question implies a different formulation.

Not just "are there good people at the department right now?" But also, "how likely is that department to treat good people well/retain them if they do good work?"

This is quite interesting. Because if we could start to find some rough answers to how important the expectations piece is, that could possibly shed some light on how to:

I'm sure we all know people who gave up possible top-flight academic careers for the private sector not just because of the paycheck, but also because they didn't really have any faith in the academic institutions/ecosystem as a whole treating them well.

Would love to know anyone's thoughts or if there are interesting papers to start running down this rabbit hole on!

"If the scientists had randomly died of disease or something, instead of being deliberately kicked out, would the effect have been similar?"

This paper by Pierre, Josh, and Wang does exactly that. They look at the sudden death of 'superstar academics' and find a noticeable decline in their collaborators' productivity.

When I was considering the line of reasoning that you just made, I wasn't sure how seriously to take the change because it was unclear to me whether that was a negative spillover that affected their capacity to do work or just that the field moved on in the absence of a superstar.

Because in Pierre's (god I love him, he's a godsend) Does Science Advance One Funeral at a Time? there seems to be an interesting dynamic. Upon an untimely death, collaborators' pubs went down and newcomers' pubs went up. In that case, an alternative model of the situation could be "the old famous group of researchers had a certain capture/influence over publishing in the area that was broken by the untimely death of one of them."

In essence, I wasn't sure what to think because, as you pointed out, their direct collaborators were hurt. But it seems like the fields where a superstar dies also get an injection of new ideas. So I withheld judgment on what I thought might be happening because it felt up in the air.

But I'm open to hearing more evidence! I like being swayed. It's fun.

Yeah, that's certainly true, the deaths have interesting dynamics. My advisor (Christian Fons-Rosen) is a co-author on that paper with Pierre and Josh. I'm definitely interested in exploring the area more.

If one wanted to start flirting with how to disentangle the lost collaborator effect from the lost capture effect, do you think there are any decent ways to do that?

I imagine whatever it is will be imperfect. But maybe there's some pseudo-randomness to certain positions of status/power coming to an end that are independent from one's research capacity.

Like maybe you're only allowed to be the chair of x society or editor of y journal for a fixed time period and then you're forced to step down. Maybe something like that could be a codifiable measure of some level of capture of a field.

Maybe?

I think it's a great question. Two papers come to mind about capture that are somewhat related. These are not directly related but get at the capture part of research to some extent:

"Like maybe you're only allowed to be the chair of x society or editor of y journal for a fixed time period and then you're forced to step down. Maybe something like that could be a codifiable measure of some level of capture of a field."

I know some people who are working on something kind of like this. Happy to explore this further when we chat.

Thanks! I'll read them this weekend! Have a good weekend!