This is a link post for: https://dda.ndus.edu/ddreview/four-horsemen-of-technological-change-farmers-elevator-operators-coal-miners-bank-tellers/

Technology has changed the dynamic between labor and capital in the broader economy since the Industrial Revolution. The new steam and manufacturing innovations transformed large parts of the economy by explicitly changing the economics of labor and wages—and increasing inequality along the way. Ever since the early 19th century, when Luddites smashed looms out of fear that newfangled machines would decimate their wages and way of life by replacing specific labor, people have been fearful of technological change.

Technofuturists, however, have always dreamed of technology bringing us a brighter future. They envision a day when machines free workers from the drudgery of factory toil and bring 10-hour workweeks and more leisure time to explore their creativity and forge human connections.

Who is right? With the accelerating speed of AI, the development and deployment of which promise to be as disruptive as the looms of old, if not more so, we will find out sooner rather than later. Just this year, new tools, such as Google’s LaMDA and OpenAI’s DALL-E and ChatGPT, have demonstrated astounding capabilities, ranging from convincing chat conversations to acceptable prose to mind-warping images that delight and disquiet.

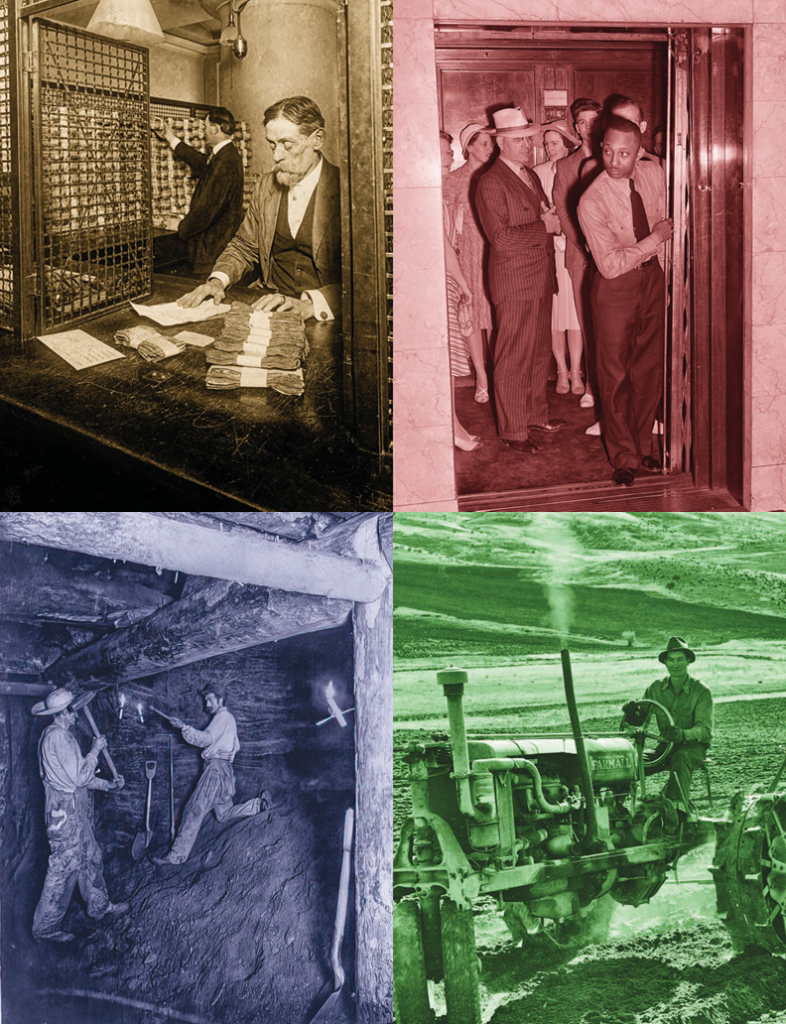

But before we look to the future, we should consider the past. History shows us four examples of change brought about by labor-replacing technology. And so, the question looms: Will the future of workers today resemble that of farmers, elevator operators, coal miners or bank tellers?

Farmers

Farming was once the basis of every economy. Thomas Jefferson considered farmers in our sparsely populated country as the nation’s soul and its future, arguing at the birth of the republic that “[s]uch is our attachment to agriculture, and such our preference for foreign manufactures, that be it wise or unwise, our people will certainly return as soon as they can to raising raw materials and exchanging them for finer manufactures than they are able to execute themselves.” [1] This remained the case for a long time. In 1850, farming accounted for half of all American jobs, but by 1980, due to technological change, that fell to only 4 percent.[2] Since then, the proportion of agricultural workers has levelled off at 1 percent.[3]

Agriculture is one of humanity’s first technologies, along with fire, domesticated animals and spears. To this day, new technology drives efficiency with inventions such as machine vision satellite imagery, GPS-automated tractors, the Haber-Bosch process and pesticide-resistant GMOs. These technologies don’t reduce the value of farming. In fact, it is the opposite; they allow for much more value to come from much less labor, creating surplus value that can be enjoyed and invested in other areas of the economy. Though agriculture has always been a field of innovation, from forming the basis of the city-state to the invention of crop rotation, for millennia it was a labor-intensive business that consumed the majority of the workforce. Industrialization supercharged that, turning farming into just one part of a largely urbanized economy.

Historically, technological innovation powered the Industrial Revolution and made cities dominant manufacturing centers. From 1859 to 1929, America’s industrial output increased 28 times, and not coincidentally, from 1850 to 1920, America went from 15.3 percent urban to more than half urban.[4] Today, only 21 percent of Americans live in rural areas.[5] This type of huge, secular change driven by technology is rare, not because technology seldom changes societies but because very few technologies can serve as a platform for over a century of downstream inventions. When it happens, people eventually adjust due to generational change and economic incentives. Like any long-term change, it is not always a straight line, but because it is so gradual and the impacted industries so huge, people have time to acclimate to the new reality. Even a state such as North Dakota, which is nearly twice as rural as the rest of the country, has a nearly 60 percent urban population,[6] which a century ago would have exceeded even the most urban countries in the world.

Elevator Operators

Unlike farming, not all technological change and labor shifts are gradual. Consider the elevator—with 6,818 vertical miles of elevators installed in the U.S. and Canada[7]—even today one of the most widespread forms of public transportation. Elevators existed for centuries, but in 1852, Elisha Otis’s automatic brake convinced people they were safe to use, especially for skyscrapers. As a result, use skyrocketed, and with it, the number of elevator attendants.

Today elevator operators have all but disappeared—there are so few that, other than specialized industrial roles like grain operators, the job is not even tracked. But at the time, they were required to, as the name suggests, actually operate the elevator, controlling everything from the speed of travel to even manually opening doors. Though automated elevators debuted in 1900, elevators still required attendants because users distrusted the machine. Due almost entirely to user demand, the number of elevator attendants increased to over 90,000 in the 1940s, according to the U.S. Census. That all changed in 1945 when New York City elevator attendants went on strike for more pay. That was the beginning of the end. By 1950, the first census after the strike, employment of elevator attendants had flatlined, and by 1960 automated elevators were becoming ubiquitous, and attendants’ jobs were on the way out.[8]

It took a PR campaign and some psychology to make people like driverless elevators (why do you think elevators used to have a voice that calls out each floor?), but automating elevators made them smaller and safer. They were simply better, so once people realized they were safe, no one mourned the elevator operators. People considered elevator operator jobs “low grade,”[9] and many of these operators moved to better jobs given the upward mobility at the time. By 1965, it was almost impossible to find working elevator attendants. That said, it is remarkable that it took more than 50 years to go from a technology’s perfection to the mere beginning of its adoption curve. But once it started, the change was rapid considering the physical infrastructure buildout that was needed.

Coal Miners

Coal mining employment peaked in 1923 with 862,536 miners and has steadily declined ever since. In 2016, there were only 81,484 coal miners, marking the first year on record this number fell below six figures. Now there are only 61,402 coal miners.[10] Given the long length of time over which this has occurred, technological change—more than environmental regulation—is what has been killing coal mining jobs. Coal competes with other forms of energy, such as natural gas, wind and solar. As such, the coal industry has invested in technology for nearly a century to reduce the labor intensity of coal mining to cut costs and remain cheap.

But even that has not been enough in the face of competition from alternative energy sources such as natural gas, powered by the fracking revolution, and solar, which has benefited from Wright’s Law, or the idea that costs decrease as cumulative production increases and an industry “learns.”[11] For example, solar is approaching prices cheaper than coal in some places, according to Bloomberg[12] (though it cannot provide baseload power and relies on complementary power sources like natural gas, nuclear and batteries). Ironically, according to an American Enterprise Institute estimate, solar is 79 times more domestically labor-intensive per megawatt hour than coal, making it a net job creator.[13]

Yet, coal-centric regions have been decimated. States such as West Virginia are among the poorest in the U.S., but even within states, like Pennsylvania, coal regions are some of the poorest counties (in fact, none of the five “Coal Region” counties has per capita income above Pennsylvania’s average). This is not a uniquely American phenomenon. Across the Western world, in countries such as Germany, large, historic coal regions are among the poorest in their countries.

Something has broken down. Unlike in our previous parables, coal country did not rejuvenate. Coal’s fade has occurred over a long period, just like farming, but coal country failed to adapt. Unlike farming, people did not move to new energy industries, nor did those industries come to them. Coal production acquired massive technological improvements that reduced labor intensity, like elevators. Yet, new jobs did not materialize to take advantage of the newfound productive wealth and newly free labor even though alternatives (that could piggyback off at least some of the previous infrastructure investments) were available. Though many of these coal regions are rural, they are already part of the supply chain and have cheap land and labor that could be used for, say, manufacturing. And while technological improvements in coal drove the cost down and managed to increase demand for decades, it was not enough to stop the bleeding.

Coal demand peaked internationally in 2013, according to the International Energy Agency’s Coal 2020 report,[14] so there was not enough value created to offset the losses. Coal regions have the wealth and human capital to compensate by building natural gas refineries, or new nuclear plants, or solar manufacturing, but didn’t—the interesting question is, why.

So, what isn’t working? Like many natural resource-based industries, coal has found it difficult to adapt. The key is culture. Coal mining historically involved dedicated populations, which created a culture[15] that melded community and identity. Because the decline of coal mining has been slow-moving and culturally entwined, it has led to a sense of despair and lack of belonging stemming from a sense of betrayal. The result is the interesting phenomenon of coal country powering populist movements and politicians throughout the Western world, such as Brexit, Marine Le Pen, Donald Trump and even Geert Wilders in the Netherlands, all of which put up some of their best vote margins in coal-dominated regions that embraced populism in an attempt to grasp onto hope. Combine that with issues of economic mobility, like difficulty moving and job-retraining programs with poor records of success, and it’s no wonder coal country, with its large sunk costs in fixed assets, has had difficulty adjusting.

States such as North Dakota, where a mere 15,000 out of 410,000 employees are in lignite coal,[16] which is amazingly the same number as in 1910,[17] have fared better because coal was never as dominant. Displaced coal workers were absorbed into other energy sources or even other industries.

Bank Tellers

Not all technological change results in the destruction of jobs, as with elevator operators; some, like ATMs, increase the number of jobs.

Arguably, ATMs were the first large-scale commercial application of artificial intelligence. Interestingly, ATMs are also arguably the first successful commercial application of machine vision, which counters claims that AI necessarily destroys jobs. Though the first ATMs were chemistry-based, Yann LeCun’s backpropagation technique[18] allowed for optical character recognition to be widely used for reading checks in the 1990s, with the first commercial deployment in 1994.

When ATMs began appearing in the late 1960s, many commentators predicted they would eliminate bank tellers. This was seen as inevitable; ATMs were cheaper, faster and worked 24/7. They were popular with customers from the start and spread rapidly thanks to new technologies[19] once the shared ATM was developed (beforehand, ATMs were only usable within one bank’s networks),[20] with a massive acceleration starting in the 1990s due to a big decrease in the cost of deployment.[21] Nonetheless, since the introduction of ATMs, the number of bank tellers has steadily increased from 300,000 in 1970 to 600,000 in 2010. Though the number of bank tellers per branch decreased from 21 to 13, it became cheaper to operate a branch, so banks opened more of them. In 2020, the number of bank tellers finally decreased for the first time, not because of ATMs but because of the internet, as banks finally started closing branches.

Furthermore, tellers adjusted their jobs around their computerized colleagues.[22] The ATM-bank teller example is one of tech’s favorite counterpoints to those who oppose technological progress. It is a fantastic story of what are called “centaur systems,” or the observation that in many contexts, AI and humans working together outperform either one separately by focusing on their strengths (as in chess, which is the namesake of this type of collaboration). In economic terms, this type of capital-labor substitution revealed a complementarity. Humans are better than machines at selling. By making bank branches cheaper to operate, ATMs transformed them into high-powered sales centers where humans intervene only for more complex transactions or to upsell more profitable services.

The Paths Forward

What we can learn from these four archetypes of technological change is this: Whether a new technology appears suddenly or gradually, as long as the economy is growing over decades (even with periodic recessions, such as the one we risk today), a soft landing is possible. Farmers took the new jobs created by the urbanization their technology enabled, elevator operators found new jobs because they were in a fluid economic environment, and bank tellers specialized to work with their ATM partners because a growing economy meant more demand for financial services. But to make that happen, we need to communicate the benefits of new technologies and start repurposing that newfound productivity immediately for future industries. Older industries often try to resist, so we need to make people want to work in the new industries by making sure the new economy’s onramps maintain workers’ dignity and use their skills as much as possible. For coal miners, for example, this would mean job training programs[23] that widen the aperture of cultural pride from providing coal to providing energy, even in the energy forms that will win the future. It also means reusing physical infrastructure when possible, such as the recent Berkshire Hathaway effort to convert a West Virginia coal plant into a nuclear power plant.[24]

This brings us back to AI. The coming wave of AI will be swift, and tools from DALL-E to ChatGPT to LaMDA to GitHub Copilot are coming for white-collar jobs first: the types of jobs that are truly digital and require little or no capital expense to do. The past six months alone have seen a tidal wave of progress with more to come. This change is coming faster than we think, from AI graphic arts to fully robotic “lights out” car manufacturing. We will face more change than we have faced in hundreds of years, and if we don’t learn from the past to think about the future, it will get messy. It won’t be a few thousand coal miners wondering what they’re going to do for work; it will be millions of workers. We need to be nimble so that when new jobs come around that look different from work today, we know what to retrain for.

We are not passive actors unable to influence the social conditions surrounding technological change, and that is what will make the difference. We don’t yet know which technologies will fall into which buckets, but there are lessons we can learn from the history of industrialization that can change how we are affected by those technologies. The key differentiators between the optimistic and pessimistic futures of technological obsolescence are escape valves and creating value faster than it gets destroyed, so that there are more goods and services to enjoy and invest in, as with farmers, elevators and ATMs.

AI’s immediate future involves creating software that can respond to unstructured queries and create surprisingly good work in highly constrained domains and conditions. Though some leaders and technologists, such as Alibaba founder Jack Ma, predict “decades of pain” from the transition,[25] it need not be so. It is crucial not to ask “dumb AI” to do too much,[26] meaning that we should not ask AI systems to go beyond their narrow domains nor deploy them beyond the capabilities they actually have, as opposed to the ones we wish they had. Rather, we should use AI to augment humans and use humans to augment the work of AI systems. The best machines will often not replace people in factories but will allow them to increase productivity by doing specific steps better and more safely, while even giving us back some free time and allowing Western countries to reshore manufacturing.

It is also critically important to keep track of the industries that complement AI-heavy workflows, so displaced workers can adapt quickly and, if need be, move into new industries or rapidly expanding industries that can absorb the excess labor. We cannot guarantee that the future will look like ATMs, because so much depends on the technology itself. But we can increase the likelihood of that future by embracing it and deciding, collectively, to adapt. Banks chose not to minimize costs but to maximize profits, which meant instead of eliminating jobs the second they could, they repurposed them into higher-value functions. As a society, we should take the same tack. It is also critical to recall that this change occurred in a regulatory environment that did not penalize the banks for changing the nature, scope and quantity of jobs in response to technological change. Though government will have a role in helping those who fall behind, these four technologically challenged horsemen show that flexibility in society at large is essential for a successful transition.

The future of AI is coming fast, and it is coming strong. But take solace in this: Farmers, elevator operators, bank tellers and coal miners are not unique. These are merely examples symbolizing countless other professions that have been disrupted over time by new technologies. Just like these stand-ins, the workers of the multitude of historical industries adapted too. For all the generational tumult that whole economies can face, humans adapt and survive. Unlike our ancestors, we have the benefit of learning from history to enjoy the fruits of technology without the pain.

What a great article! I think it's so important to have specific historical examples of how technology impacted jobs, vs. speculating in a vacuum. Your emphasis on culture and dynamism is especially on point:

"For coal miners, for example, this would mean job training programs[23] that widen the aperture of cultural pride from providing coal to providing energy, even in the energy forms that will win the future. It also means reusing physical infrastructure when possible, such as the recent Berkshire Hathaway effort to convert a West Virginia coal plant into a nuclear power plant.[24]"

Focusing on the upside or "widening the aperture" of work people consider will matter a lot, as will just being open to change (vs. using regulation to stop it before we even fully understand it).

This also reminded me of a study that analyzed the impact of AI on taxi drivers. The key finding was that AI helped less experienced/less skilled drivers be more productive, rather than replacing drivers wholesale: "We find that AI improves drivers’ productivity by shortening the cruising time, and such gain is accrued only to low-skilled drivers, narrowing the productivity gap between high- and low-skilled drivers by 14%. The result indicates that AI’s impact on human labor is more nuanced and complex than a job displacement story, which was the primary focus of existing studies."

https://docs.iza.org/dp15677.pdf

Thanks for sharing this article here.